🌟 Editor's Note

Welcome to another exciting week in the Vision AI ecosystem! We've got a packed newsletter full of insights, events, and inspiring stories from the heart of innovation.

🗓️ Tool Spotlight

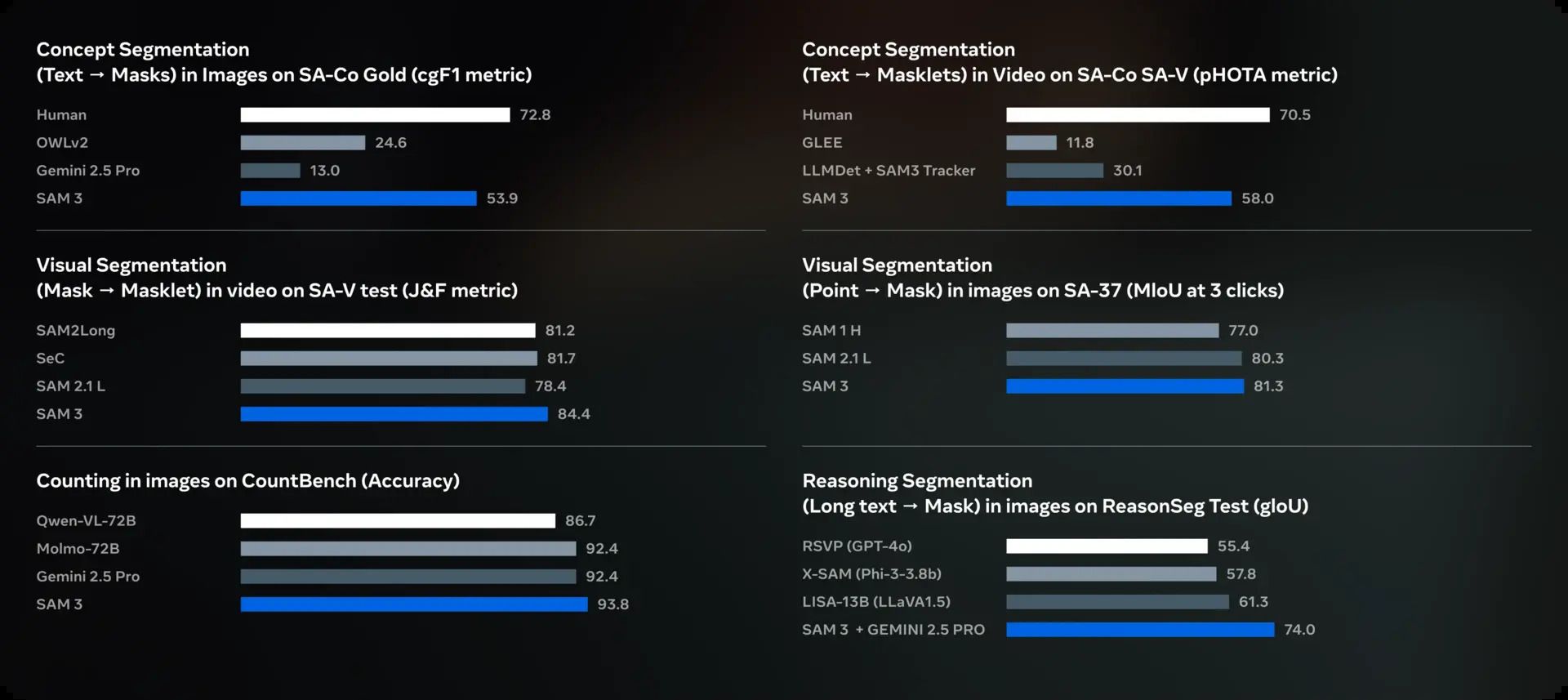

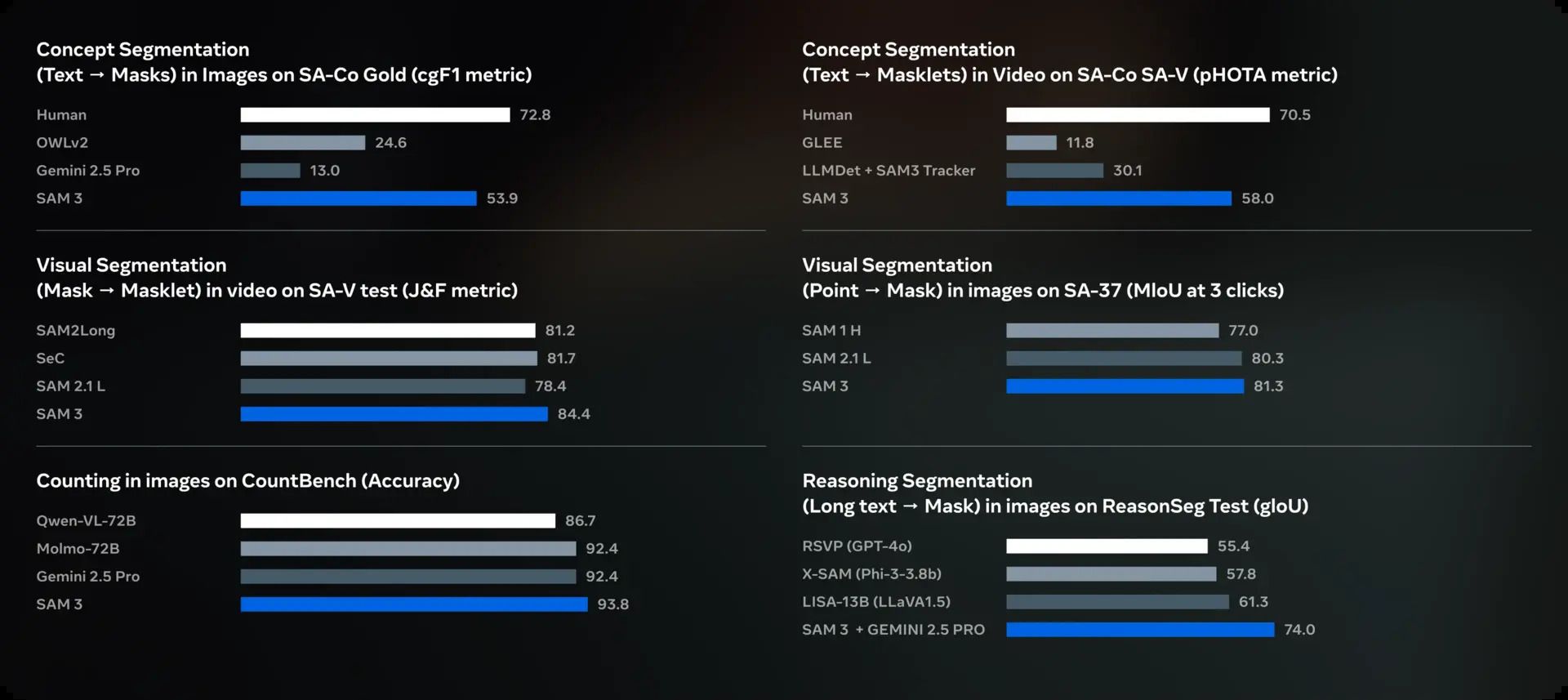

Meta releases SAM 3

SAM 3 is the newest version of Meta's “Segment Anything” model (released Nov 2025). It is a unified vision model (≈848M parameters) that detects, segments, and tracks objects in images and videos, not only via clicks and boxes (as in prior versions) but also via natural-language text prompts or example images (“exemplar prompts”).

Architecturally, SAM 3 separates recognition (does this object match the prompt?) from localization (where is it and what’s its mask), using a detector + tracker backbone.

Because of its flexibility, speed (real-time on capable hardware), and open-vocabulary support, SAM 3 is positioned as a general-purpose segmentation engine, useful for applications such as image/video editing, annotation pipelines, AR/VR, and content creation. [link]

🚀 Blog Spotlight

Finding the Flaw in 3D

A recent article provides a hands-on comparison of three 3D anomaly detection pipelines: MulSen-AD, CMDIAD, and Point-AD.

MulSen-AD is a thorough multi-sensor fusion framework suited to finding all defect types, but it pays for this with heavy I/O and slow performance. CMDIAD uses cross-modal distillation for fast, efficient detection, but it needs well-calibrated data to avoid errors. Point-AD achieves strong zero-shot detection on novel objects by leveraging CLIP, though its complex rendering and systems-integration setup is a major hurdle.

The author concludes that the "best" method depends entirely on the use case: MulSen-AD for robust R&D, CMDIAD for speed, and Point-AD for maximizing object variety. [link]

🦄 Startup Spotlight

Makrr.ai is a no-code computer vision startup that transforms any camera into a smart sensor for real-time video analysis.

Users can easily connect existing cameras or video streams and train the AI by simply tagging objects, people, or defects. The platform automates machine learning model creation, providing instant insights, smart alerts, and compliance reports.

Makrr's core value is offering industries a fast way to monitor operations, detect anomalies, and improve efficiency without requiring any coding or a dedicated machine learning team.

🔥 Paper to Factory

A Comparative Analysis of Standard and Lightweight 3D U-Net and 3D DeepLabV3+ for Brain Tumor Segmentation

This study compared standard and lightweight 3D U-Net and DeepLabV3+ models for brain tumor segmentation from MRI.

While the standard U-Net achieved the best Whole Tumor (WT) Dice score, the standard DeepLabV3+ proved statistically superior on the more complex sub-regions (Tumor Core and Enhancing Tumor).

Importantly, the simplified lightweight models achieved a 70% parameter reduction and 40% speedup with only a minor performance drop, confirming their viability for clinical settings with computational constraints. [link]
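For readers new to the metric, the Dice scores mentioned above measure the overlap between a predicted mask and the ground-truth mask: twice the shared voxels divided by the total voxels in both. Here is a minimal sketch, not the paper's evaluation code; the function name `dice_score`, the voxel-set representation, and the toy volumes are all assumptions made for illustration.

```python
from itertools import product


def dice_score(pred_voxels: set, target_voxels: set) -> float:
    """Dice = 2 * |P ∩ T| / (|P| + |T|) for two sets of voxel coordinates."""
    if not pred_voxels and not target_voxels:
        # Both masks empty: treated as perfect agreement (a common convention).
        return 1.0
    overlap = len(pred_voxels & target_voxels)
    return 2.0 * overlap / (len(pred_voxels) + len(target_voxels))


# Hypothetical toy masks: each voxel is an (x, y, z) coordinate.
pred = set(product(range(1, 3), range(1, 3), range(1, 3)))    # 2x2x2 = 8 voxels
target = set(product(range(1, 3), range(1, 3), range(1, 4)))  # 2x2x3 = 12 voxels

score = dice_score(pred, target)
print(f"Dice: {score:.2f}")  # 2 * 8 / (8 + 12) = 0.80
```

A score of 1.0 means the masks match exactly and 0.0 means they do not overlap at all. In a brain tumor setting, the same calculation would typically be run separately for each region (Whole Tumor, Tumor Core, Enhancing Tumor).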

🏆 Community Spotlight

In its latest blog post, Ultralytics reflects on its experience at Web Summit 2025 in Lisbon, Portugal.

AgriNovus Indiana’s latest Agbioscience podcast highlights how FloVision builds AI-driven solutions to help the food industry become more financially sustainable.

Till next time,